Evaluations Overview

Evaluation in machine learning is the process of determining a model’s performance via a metrics-driven analysis. Freeplay allows you to incorporate evaluations into your product development lifecycle in a way that is focused on your particular product context or domain. By defining appropriate evaluations for your specific use case, you gain insights that are far more valuable than generic industry benchmarks. Read more on our blog here. Freeplay supports four modes of evaluations that each work together:- Human evaluation: aka “data annotation” or “labeling”, where your team can easily review and score results

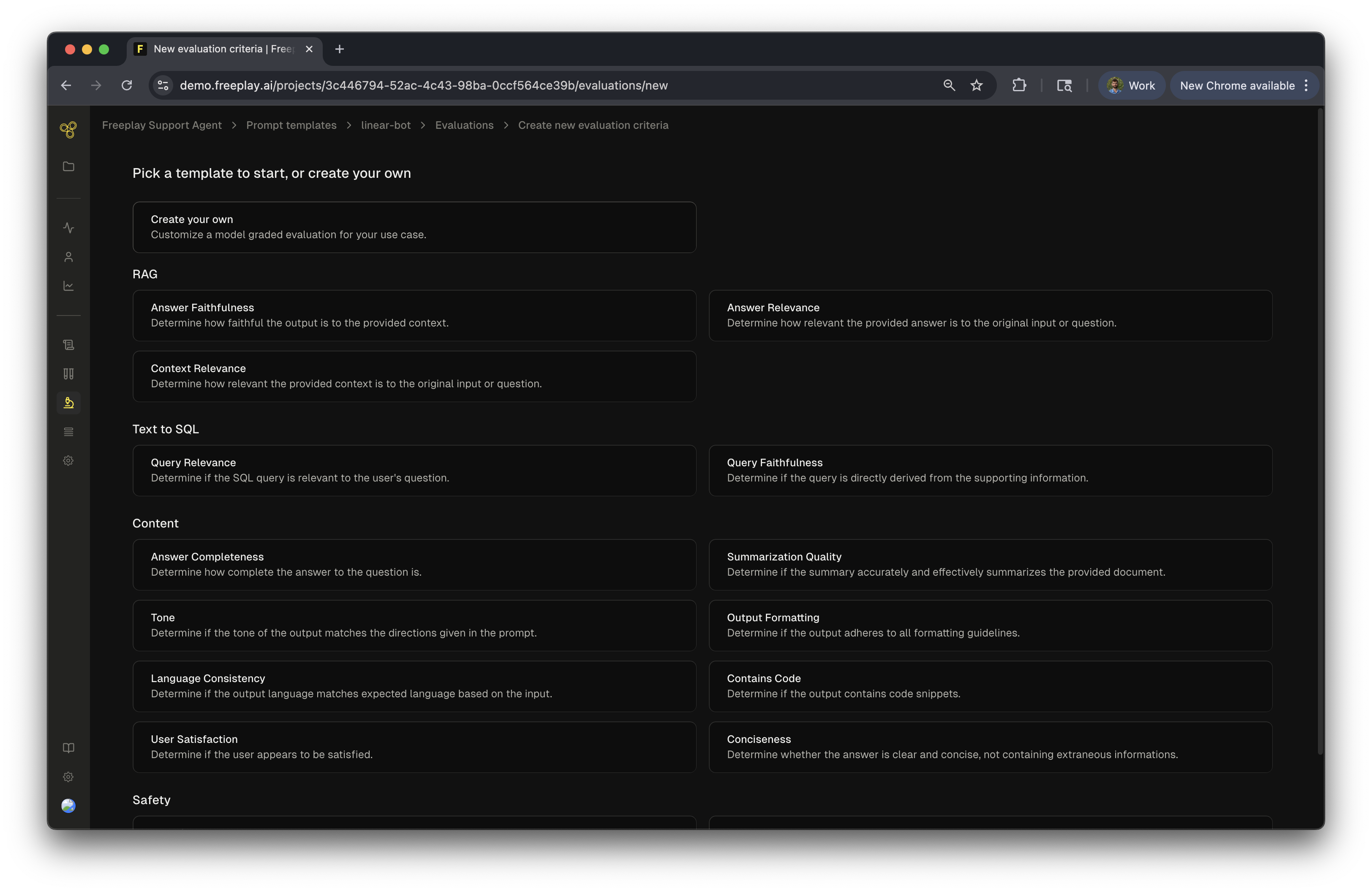

- Model-graded evaluation: using LLMs as a judge for nuanced evaluation criteria instead of humans

- Code evaluation: deterministic evaluation functions that run server-side on Freeplay’s servers or client-side in your own codebase to validate outputs against specific patterns, formats, and conditions

- Auto-categorization: automated tagging of your application logs with specified categories

Configuring Evaluation Criteria

For each of your prompts, you can configure one or more relevant human, model-graded, or code evaluation criteria in Freeplay. Any evaluation criteria configured in Freeplay can be used for human labeling/annotation, and you can optionally enable model-graded auto-evaluations for relevant criteria too. For example, you might want model-graded evals to score the quality of an LLM response, but you only want humans to be able to leave notes on a completion. Client-side code evaluations can be logged to Freeplay directly using our SDKs.Resources

What’s Next Now review each evaluation type and then move onto test runs once all your evaluations are configured!

- Code Evaluations

- Human Labeled Evaluations

- Model-Graded Evaluations

- Creating and Aligning Model-Graded Evals - Practical guide for building effective LLM judges

- Test Runs