The OpenAI Responses API is OpenAI’s latest API for generating model responses. It supports text, images, tool calling, structured outputs, and more through a single interface. Freeplay supports formatting prompts and recording completions with the Responses API, so you can use it directly in your code.


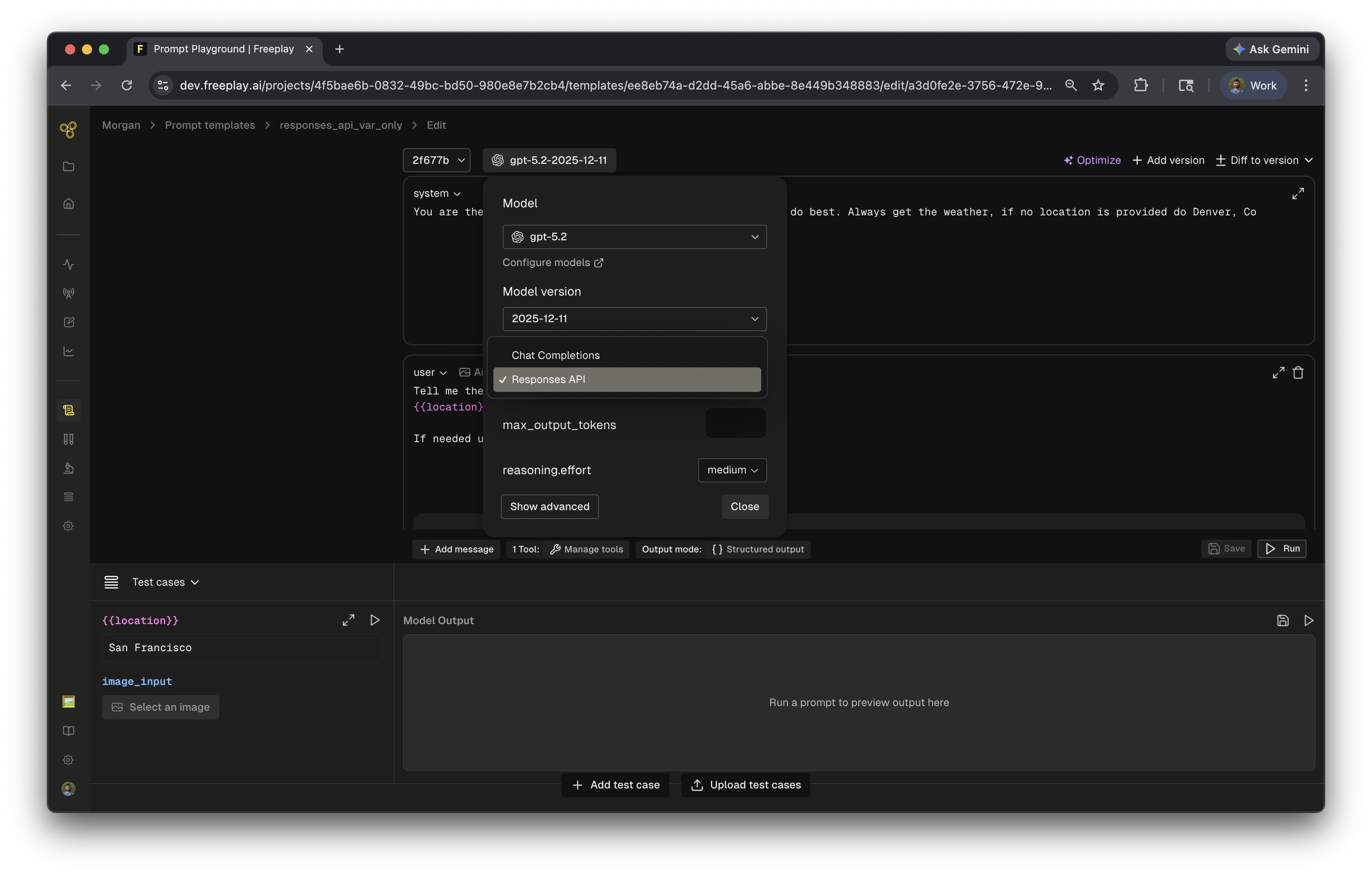

Setting up in the Prompt Playground

To use the Responses API with a prompt template in Freeplay:

- Open your prompt template in the Prompt Playground.
- Select a compatible OpenAI model (e.g., gpt-5.x or gpt-4.x).
- Open Model Settings.
- Change the API Format to Responses.

With this setting, formatted_prompt.llm_prompt returns the input array expected by openai.responses.create() instead of the Chat Completions message format.
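As a minimal sketch of where formatted_prompt comes from in code (steps 1 and 2 below), the following assumes the Freeplay Python SDK's Freeplay client and its prompts.get_formatted method; confirm both against the SDK reference. The helper names make_clients and fetch_formatted_prompt and the environment variable names are hypothetical.

```python
import os
from typing import Any, Dict, Tuple


def make_clients() -> Tuple[Any, Any]:
    """Step 1: create the Freeplay and OpenAI clients."""
    # Imported inside the function so this module loads even without the SDKs.
    from freeplay import Freeplay  # assumed import path of the Freeplay Python SDK
    from openai import OpenAI

    fp_client = Freeplay(
        freeplay_api_key=os.environ["FREEPLAY_API_KEY"],  # hypothetical variable name
        api_base=os.environ["FREEPLAY_API_BASE"],  # hypothetical; your Freeplay API URL
    )
    openai_client = OpenAI()  # reads OPENAI_API_KEY from the environment
    return fp_client, openai_client


def fetch_formatted_prompt(
    fp_client: Any,
    project_id: str,
    template_name: str,
    variables: Dict[str, Any],
    environment: str = "latest",
) -> Any:
    """Step 2: fetch a template whose API Format is set to Responses."""
    # Assumed SDK call; the result should expose llm_prompt, system_content,
    # tool_schema, formatted_output_schema and prompt_info.
    return fp_client.prompts.get_formatted(
        project_id=project_id,
        template_name=template_name,
        environment=environment,
        variables=variables,
    )
```

Taking the Freeplay client as a parameter keeps fetch_formatted_prompt easy to test with a stand-in object; in an application you would call make_clients() once and reuse both clients.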

How it works

When the API Format is set to Responses API:

- formatted_prompt.llm_prompt returns the input array for responses.create()
- formatted_prompt.system_content returns the system instructions (passed as instructions)
- formatted_prompt.tool_schema returns tools in the Responses API format
- formatted_prompt.formatted_output_schema returns the JSON schema for structured outputs
- formatted_prompt.prompt_info.model_parameters contains model settings such as temperature

1. Set up clients

Initialize the Freeplay and OpenAI client SDKs.

2. Fetch prompt from Freeplay

Pull in the formatted prompt. Because the API Format is set to Responses API, the prompt comes back already formatted for the Responses API.

3. Build the Responses API call

Map the formatted prompt fields to the Responses API parameters: instructions, tools, the structured output text format, and so on.
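A minimal sketch of this mapping, assuming formatted_prompt exposes the fields listed under How it works. Two further assumptions are labeled in the comments: that prompt_info.model holds the model name, and that the model_parameters keys already use Responses API names (for example temperature). The helper name build_responses_request and the default schema name "output" are hypothetical.

```python
from typing import Any, Dict


def build_responses_request(formatted_prompt: Any) -> Dict[str, Any]:
    """Step 3: map Freeplay formatted-prompt fields onto responses.create() kwargs."""
    info = formatted_prompt.prompt_info
    request: Dict[str, Any] = {
        "model": info.model,  # assumption: prompt_info.model holds the model name
        "input": formatted_prompt.llm_prompt,  # Responses-style input array
    }
    # Assumption: keys such as temperature already use Responses API names.
    request.update(info.model_parameters or {})
    if formatted_prompt.system_content:
        request["instructions"] = formatted_prompt.system_content
    if formatted_prompt.tool_schema:
        request["tools"] = formatted_prompt.tool_schema
    schema = formatted_prompt.formatted_output_schema
    if schema:
        if schema.get("type") == "json_schema":
            # Already in the Responses API text.format shape: pass it through.
            text_format = schema
        else:
            # A bare JSON schema: wrap it. The name "output" is hypothetical;
            # add "strict": True if the schema meets strict-mode requirements.
            text_format = {"type": "json_schema", "name": "output", "schema": schema}
        request["text"] = {"format": text_format}
    return request
```

Optional fields are only added when present, so a template without tools or an output schema produces a request with just model, input, and its model settings.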

4. Call OpenAI Responses API

Pass the formatted input and parameters to openai.responses.create().
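A minimal sketch of this call, assuming openai_client is an openai.OpenAI instance and request is a dict of keyword arguments built in step 3. The helper name call_responses is hypothetical, and the status check is a design choice of this sketch rather than something Freeplay requires: the Responses API reports a status other than "completed" (for example "incomplete" after hitting max_output_tokens) when generation does not finish normally.

```python
from typing import Any, Dict


def call_responses(openai_client: Any, request: Dict[str, Any]) -> Any:
    """Step 4: send the mapped request to the OpenAI Responses API."""
    # openai_client is expected to be an openai.OpenAI instance.
    response = openai_client.responses.create(**request)
    # Design choice of this sketch: fail loudly when generation did not finish,
    # e.g. status "incomplete" after hitting max_output_tokens.
    status = getattr(response, "status", None)
    if status not in (None, "completed"):
        raise RuntimeError(f"Responses API returned status {status!r}")
    return response
```

If partial output is still useful in your application, drop the check and inspect response.status where you handle the result instead.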

5. Handle the response

The Responses API returns an output array. Iterate through it to handle text outputs and tool calls.
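A minimal sketch of iterating over response.output, assuming the item shapes used by the OpenAI Python SDK: message items carry output_text content parts, and function_call items carry name, call_id, and a JSON-encoded arguments string. The helper name handle_response is hypothetical.

```python
import json
from typing import Any, Dict, List, Tuple


def handle_response(response: Any) -> Tuple[List[str], List[Dict[str, Any]]]:
    """Step 5: split a Responses API result into text outputs and tool calls."""
    texts: List[str] = []
    tool_calls: List[Dict[str, Any]] = []
    for item in response.output:
        if item.type == "message":
            # A message item holds content parts; generated text is "output_text".
            for part in item.content:
                if part.type == "output_text":
                    texts.append(part.text)
        elif item.type == "function_call":
            # Tool-call arguments arrive as a JSON-encoded string.
            tool_calls.append(
                {
                    "call_id": item.call_id,
                    "name": item.name,
                    "arguments": json.loads(item.arguments),
                }
            )
        # Other item types (e.g. reasoning) are ignored in this sketch.
    return texts, tool_calls
```

The call_id is what you echo back in a function_call_output input item when returning a tool result to the model on the next turn. For text-only responses, the SDK's response.output_text property also concatenates the text parts for you.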